You’re Not Mad at Facebook, You’re Mad at America

Photo by Drew Angerer/Getty

This week, congressional intelligence committees held public hearings about Russia’s efforts to spread disinformation through online platforms in the 2016 election. They were more like roasts than congressional hearings, and representatives from Facebook, Twitter, and Google were the guests of honor.

I can’t believe I’m about to type out this next phrase, but good for Congress. I’m mad at these companies, too. First of all, they’re responsible for the material on their sites. One hundred percent. And the sheer amount of personal data they have and the power that data provides them and others scares the hell out of me, as it does many Americans. To quote Senator Mark Warner from earlier this week, “Candidly, your companies know more about Americans, in many ways, than the United States government does.”

Warner is pointing out the core problem: These companies are literally years beyond government control.

It’s not that I think the tech giants are doing anything particularly malicious with their information (depending on how you define malicious); it’s that they don’t really know what they’re doing with it at all, who’s using it, how, or why. They don’t know their own strength (no one really does, most specifically including the government), and it seems like they don’t want to know. Maybe out of secrecy, maybe out of fear, because some of the people leveraging their information are indeed malicious.

Don’t just get mad at the symptom. Get mad at the cause.

In this sense I’m not mad at Facebook; I’m mad at America. Of course we can and should blame Facebook and other tech giants for what they allowed to happen during the election, but that’s myopic. Senator Marco Rubio, whose primary campaign was itself targeted by Russian hackers, unwittingly pointed this out when trying to deflect the issue from the effect on the election:

“These operations, they’re not limited to 2016 and not limited to the presidential race, and they continue to this day. They are much more widespread than one election.”

He’s right: This threat is serious and ongoing, and blaming and punishing Facebook, Twitter, Google, etc., won’t address the underlying problem, because the underlying problem is a defining element of America: Our apotheosis of capitalism and our fetishization of technology opened the door for the disinformation threat years ago.

I want to be clear here: I’m not defending Facebook in this piece. For part of it I’ll be presenting their side as fairly as I can, but not arguing in support of it. We can’t know truly what to do about a problem until we fully understand the problem, and right now we don’t fully understand the problem.

Speaking of…

What Russia Did

The fact that Rubio’s statement is correct doesn’t mean that Russia’s efforts didn’t have an effect on the election. They did. Facebook itself said in written congressional testimony that even though Russian ad buys were relatively small, they reached an estimated 126 million Americans.

That’s not 126 million views. That’s 126 million people.

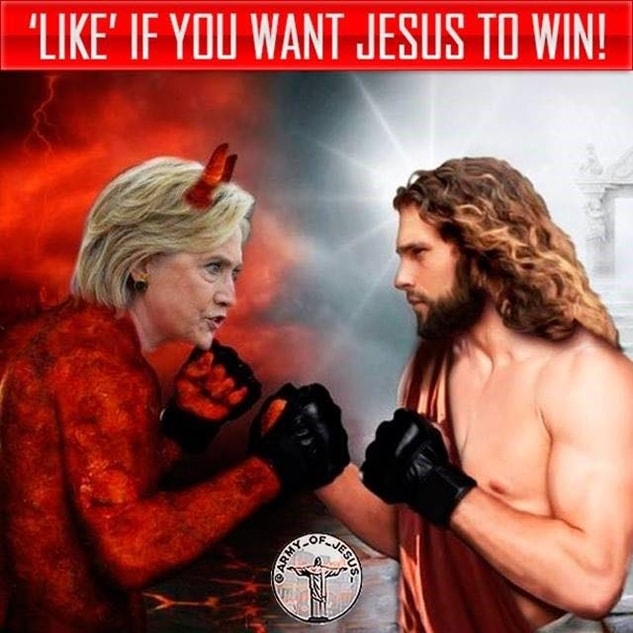

That’s an insane reach for what we know so far was only a minimal investment. Facebook has disclosed only 3,000 ads, and only a handful of those ads cost more than $1,000. This means those ads were highly targeted and outrageous, with high viral potential. Check this one out.

People with large followings (“influencers” in marketing speak) amplified this reach. These influencers included Michael Flynn, Donald Trump Jr., and Trump’s fucking campaign manager Kellyanne Conway, all of whom promoted Russian ads during the election.

(It’s odd that, as far as we know, Trump himself didn’t promote any of these ads directly. But when Facebook’s general counsel was asked this week under oath if President Trump was right when he said the Russian-purchased Facebook ads were a “hoax,” the attorney said, “No. The existence of those ads were on Facebook, and it was not a hoax.” File this under “plausible deniability” for use at a later date.)

Bottom line: Russia figured Facebook out. The platform doesn’t reward its advertising clients with reach based strictly on how much they pay. No, Russia knew, or intuited, that Facebook had long ago grown beyond the profit motive and evolved into an instrument of profit. These huge tech companies don’t care much about profits (they really don’t care) because they don’t need to: Like the “too big to fail” banks, they’ve become part of the very infrastructure of profit.

But if they’re such big shots, shouldn’t they have known?

Maybe?

Facebook certainly should have known last summer that Russians were buying ads, though not necessarily that the Russian government was behind them. You’re probably not going to like this, but here’s Facebook’s side of the story.

I understand that Facebook is irretrievably biased and its spin shouldn’t be implicitly trusted, but that doesn’t mean we can write it off and still call ourselves intellectually honest—especially if you have no professional background in digital marketing. I do, and there’s some merit to Facebook’s story—though again, I’m not arguing to convince you of anything other than that fact. It’s worth understanding, because you’ll come up against philosophical and ethical dilemmas.

Facebook reported that Russian accounts spent $100,000 on about 3,000 ads over a two-year period, from 2015 to May 2017, a number that’s certain to rise, but in Facebook terms, that’s less than the salary of one entry-level programmer. Even if we increase that number by an order of magnitude, it’s only about 1/40,000 of Facebook’s advertising revenue over that same period. Should they reasonably not just detect this income, but be able to flag it? They have a responsibility to do so, but I’d say it’s pretty unreasonable of us to expect that they would or even could have in hindsight. It’s abominable that Facebook can’t understand their business, but at the size it is, how could you expect them to?
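For readers who want to check the scale comparison, here is the back-of-the-envelope arithmetic as a short Python sketch. The only inputs are the figures quoted above: the $100,000 in reported spending and the "about 1/40,000" ratio. The revenue number it prints is simply what that ratio implies. It is an assumption derived from this article, not a figure from Facebook's financial filings.

```python
# Figures quoted in the article.
reported_spend = 100_000             # dollars Facebook says Russian accounts spent
scaled_spend = reported_spend * 10   # "increase that number by an order of magnitude"
ratio_denominator = 40_000           # the article's "about 1/40,000"

# Ad revenue implied by the article's ratio (derived here, NOT an official figure).
implied_ad_revenue = scaled_spend * ratio_denominator


def share_of_revenue(spend, revenue):
    """Return spend as a fraction of total revenue."""
    return spend / revenue


print(f"Implied ad revenue: ${implied_ad_revenue:,}")
print(f"Scaled spend share:   {share_of_revenue(scaled_spend, implied_ad_revenue):.6%}")
print(f"Reported spend share: {share_of_revenue(reported_spend, implied_ad_revenue):.6%}")
```

Either way you slice it, the spending is a rounding error at this scale, which is the point of the comparison.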

But there are other factors that their algorithms might have accounted for. They’re political ads, for one. Right?

Seems obvious enough. But technically, maybe not.

The Facebook ads (some paid for in freaking rubles) seem like they’d be a blatant violation of U.S. federal election law, but it’s not that simple. First of all, benign Russian companies and other entities buy Facebook ads (in rubles) all the time, just like other companies across the world would buy ads in their own currency. It’s fine.

What’s more, campaign ads weren’t subject to FEC regulations. Facebook managed to get the FEC to classify them as “small campaign items,” on the same level as buttons or bumper stickers, which means they didn’t need to disclose any ad buys to the FEC. Now there is a practical place to start overhauling regulations immediately.

But should Facebook have been able to identify these ads as “political” anyway? Facebook said in a blog post that most of the ads the Russians bought didn’t actually support specific candidates: “The ads and accounts appeared to focus on amplifying divisive social and political messages across the ideological spectrum.”

And in fact that’s one reason the Trump campaign would want to employ Russian attack ads: Anonymous Russians could say nasty, divisive things that Trump could not—about Muslims or immigrants for example. One such ad said, “They won’t take over our country if we don’t let them in.”

That doesn’t mention Trump or Clinton or Johnson or Farage or any politician. That’s a cultural appeal for an extreme platform, targeted to someone predisposed to accept that platform. And of course, Trump voters were far more susceptible to such messaging. Russians did the same thing from the other side, with Black Lives Matter ads.

So is that a political ad? How do you tell? What are your criteria to distinguish what’s political versus religious or social? Can you write a forward-looking rule to make these distinctions, then confidently code for it in a way that doesn’t block certain messages by mistake, infringe on someone’s rights, mistake their intent, waste investment in your company, or erode trust among your client base?
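To see how quickly a simple rule breaks down, consider this deliberately naive Python sketch. It is not how Facebook or anyone else actually classifies ads. The function name, the candidate list, and the two extra sample ads are all hypothetical, made up purely for illustration. The rule says an ad is "political" only if it names a candidate.

```python
import re

# Hypothetical candidate list; a real rule would need far more than names.
CANDIDATE_NAMES = ("trump", "clinton", "johnson")


def looks_political(ad_text: str) -> bool:
    """Naive rule: flag an ad as political only if it names a candidate."""
    words = set(re.findall(r"[a-z']+", ad_text.lower()))
    return any(name in words for name in CANDIDATE_NAMES)


# The ad quoted in this article, plus two invented examples.
issue_ad = "They won't take over our country if we don't let them in."
candidate_ad = "Vote Clinton on November 8."
benign_ad = "Johnson Plumbing: 20% off drain repairs this week."

for ad in (issue_ad, candidate_ad, benign_ad):
    print(looks_political(ad), "-", ad)
```

The rule waves through the divisive issue ad, because it names no candidate, and flags the invented plumbing ad, because the owner happens to share a candidate's surname. Those are exactly the two failure modes described above: missing the real thing and blocking a harmless message by mistake.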

Next, the Russia-linked fake accounts. Facebook has taken down about 470 of them, but the official reason the company gave for the deletions wasn’t that they were political accounts, but that they were fake and violated the user agreement.

Senator Richard Burr, a Republican (who acknowledged, by the way, that despite the evidence that Russia’s disinformation campaign began well before the election, suggesting the bigger goal was to undermine American democracy, his committee concurs with the January 2017 U.S. intelligence report that Russia was specifically trying to get Trump elected), asked Facebook’s general counsel if the platform would have shut down the accounts if they weren’t fake:

“Does it trouble you it took this committee to look at the authentic nature of the users and the content?”

The Facebook attorney dodged it: “The authenticity issue is the key,” he said, and added that the company would have shut down many of the accounts regardless of their political motives, because they violated Facebook’s terms of service.

What a freaking weenie.

What are we going to do about it?

We come to a bigger problem, though, when we consider what Rubio pointed out: Online disinformation isn’t limited to the election. Here’s Senator Dianne Feinstein (D-CA):

“What we’re talking about is a cataclysmic change. … What we’re talking about is a major foreign power with the sophistication and ability to involve themselves in a [U.S.] presidential election and sow conflict and discord all over this nation.”

All of us got taken for a ride last year. Of course, I don’t think all Americans—or even many of us—were bigly influenced by Russia’s efforts during the election. Most of us identified the BS for what it was, even if we weren’t fully aware of where it came from or why. But we all slept on just how damaging online disinformation and propaganda efforts can be—including the U.S. government, which for a number of reasons was slow to identify and act on Russian interference. And this week, members of Congress more or less admitted, though perhaps inadvertently, the government’s obvious and serious limitations when it comes to technology.

Senator Mark Warner, a Democrat from Virginia, told the corporate representatives that the Senate Intelligence Committee has been “frankly, blown off by the leaderships of your companies… Candidly, your companies know more about Americans, in many ways, than the United States government does. And the idea that you had no idea that any of this [foreign influence campaign] was happening strains my credibility.”

And here’s Feinstein again: “You’ve created these platforms, and now they are being misused. And you have to be the ones to do something about it, or we [Congress] will.”

There are the obvious questions here: What did the companies know; when did they know it; what could they and did they do about it; how neglectful were they, up to a level of complicity; etc. But these remarks also imply another question, which I’d phrase to Sen. Feinstein like this: “Well, what can Congress do?”

The Four Challenges

The regulation of information poses unique challenges that, despite Feinstein’s tough talk, the U.S. government simply isn’t prepared to take on. There are two major ones.

The first is our government’s lamentable technical inability to address the problem. This is different from similar historical moments, such as trust-busting, because Washington is years behind even comprehending what it is they’d be regulating, and this gap literally doubles every two years. This is maybe one reason we blame Facebook and Google for not regulating themselves: We instinctively understand it’s hopeless for anyone else to do the job.

(And think about that: We ask these companies to regulate themselves, then get upset when they don’t do a good job. Companies are biased to the bottom line. Even if they had the best of intentions, they still wouldn’t be fair actors—consciously or not.)

The second challenge for the government is its authority. We run into major and valid First Amendment concerns. How can you really say what’s “fake news”? What about writing that has some true stuff and some false stuff? What about parody? Hell, what about mistakes? Would you trust a third party to tell the difference between mistakes and intent to deceive and then go about censoring them? The press often can’t literally call Trump a liar, because they can’t truly know if he means to lie or if he’s just delusional.

Who is a journalist, by the way? Who isn’t a journalist?

This isn’t the first time a capitalist model designed to maximize profit has failed us socially, but it might be the first time such a failure is literally sanctioned by the Constitution.

The same problems apply to the other side: What can the tech companies do about what they can do?

First, the question of ability. You might reason that these companies created this technology, so they should be able to control it.

(Now who sounds naive?)

From the tech side it’s not so much “should” now as “could.” An extreme example: I imagine many of the people who knee-jerk believe tech giants should be able to exercise micro-control over their vast networks also fear the rise of artificial intelligence technology, and precisely because at some point tech companies won’t be able to control it. Well, for one, digital advertisers are already using AI to maximize the reach and personal impact of their ads.

The truth is that the reach of these platforms, and the speed of that reach, is unimaginable. Combine that with the difficulty of sorting out “gray” content, and the massive scope of the task becomes apparent. Of course, the companies could devise algorithms that flag the spread of certain content (measuring qualities such as outrage, for example, and matching with origin, geography, etc.). These algorithms obviously already exist, and they could be tuned further. For instance, Facebook has learned from the attack on the U.S., and shut down many thousands of accounts in the lead-up to French and German elections this year. This, however, brings to light a problem Americans are notorious for forgetting: The world is really, really big. Tech giants must learn to account for political interference not just in the U.S., but across the globe.

Here’s Facebook’s “promise” to Congress:

“Going forward, we are making significant investments. We’re hiring more ad reviewers, doubling or more our security engineering efforts, putting in place tighter ad content restrictions, launching new tools to improve ad transparency, and requiring documentation from political ad buyers.”

But what kind of resources can and will Facebook, Twitter, and Google throw at the problem? What’s their profit incentive here? Their incentive is actually the opposite: Such an effort, and it’d have to be a massive one, would have to be almost purely altruistic. You might argue that there is a profit incentive: People will stop using these platforms if they find them dangerous or unreliable. This is true of most products, but not necessarily true of these companies, which don’t provide products at all, but provide the ways to provide products. They’ve insinuated themselves in industries worldwide, and their technology is so concentrated, with such a head start and rate of growth, that where car companies could catch up with Ford or GM, it won’t be that easy with Facebook and Google.

Ah yes: Google

Google is in these hearings, too, because its platform was also exploited. But Google is a different animal. It pushes information differently than Facebook: Users look for specific information; they’re not blasted by random information. It’s harder for Google to regulate that service because vectors of disinformation are harder to discern and target. And its new “AMP” mobile news delivery levels the playing field, giving almost any site the veneer of legitimacy.

This is a much bigger problem.

Here’s a piece I wrote about a simple and perfectly legal way to exploit Google’s algorithms to sow massive amounts of targeted disinformation. I’d found a certain political white lie had gained popularity in right-wing circles, then noticed that right-wing media was clearly gaming Google results the way any digital marketer can inflate search rankings. This is totally legal, and it’s responsible for the fission in our information universes, in the two hemispheres of the American body politic. It also might be impossible to stop, for the same First Amendment concerns I pointed out earlier:

How can you really say what’s “fake news”? What’s real news? What about “gray” news, the in-between stuff? What’s a lie? Would you trust Google’s algorithms to tell the difference between mistakes and an intent to deceive and then censor possibly your own work?

And when you get into that gray area, who is the arbiter of truth?

I mean, how can you say something is illegal when it’s in the Constitution?

There’s no good, ethical way to regulate the free flow of misinformation on these vast platforms: They’ve grown beyond capitalism to become the very infrastructure of capitalism itself. They have no skin in the game except possibly for concern for brand damage. No one but them can force them to do anything without also reaping major economic consequences.

Besides, who has the technological know-how to keep up with these platforms, especially now that they’re adopting AI?

If our solutions focus on solving the glaring and consequential technological problems of the recent past but don’t address these underlying issues that gave rise to those problems in the first place, this threat won’t go away, ever, and it will continue to divide you from your neighbor.

At the end of the day we have to recognize that Facebook is a business, one that built a platform of such a size, complexity, and power that the company itself couldn’t fathom its own vulnerabilities and contradictions. I’ve said this before, but Facebook is a geeky kid in high school P.E., concerned only with himself, basking in the brilliance of his own brain during free time, and he got pantsed.

That’s not to undersell the threat, but to do the opposite, to emphasize it: The normalcy is severe. During the election, Facebook was doing the same thing they do to us every day: Exercising capitalism to an irresponsible degree. If anything, that is what should scare you: It was business as usual.