In the future, android butlers will dote on you, serving your dinner and wiping up your spills. Cyborg soldiers will replace human troopers on the battlefield, largely unfazed by shrapnel and bullets. Robo-firefighters will brave burning buildings, their vital circuits encased in protective insulation. With robots to tend our bars, drive our cars and do all of our heavy lifting, we’ll enjoy a wonderful world populated by automatons programmed to do our bidding. A whole class of cyber-servants created, more or less, in our image.

Which is unfortunate, because we’re a bunch of jerks.

Look at that first paragraph: subjugating robots to our collective will isn’t a groundbreaking notion. We’ve basically plotted out the mass domination of technological beings that don’t even exist yet. In fact, we strive for said technology expressly to serve us. So will it be a surprise to anyone when the robots rise up and crush humanity? And, would you really be able to blame them when they do? Because they will. But they don’t have to. That’s up to us.

Alex + Ada, which released its second volume this week, presents a surprisingly sober and relatable lesson on robot-human relations. The series shows us a world both reliant on and terrified of robots. It’s a world where brain-embedded electronics make us and our fancy iPhones look like the cavemen at the beginning of 2001: A Space Odyssey. There’s Alex, a sullen but kind, painfully average guy not quite over his recent breakup. Then there’s Ada, the Tanaka X-5 “companionship” android given to him by his grandmother. Alex is weirded out at first, concerned with how his new robot will look to others, but ultimately can’t bring himself to send her back. Subtle tidbits seeded throughout the early issues reveal the world around them. Androids, for instance, must have their manufacturing logo visible at all times, one of many measures taken by humanity since a robot called P-O11 became self-aware, uploaded its sentience into a mob of warehouse bots and massacred more than 30 people. Since then, humans have lived in a mostly unspoken fear of the next incident.

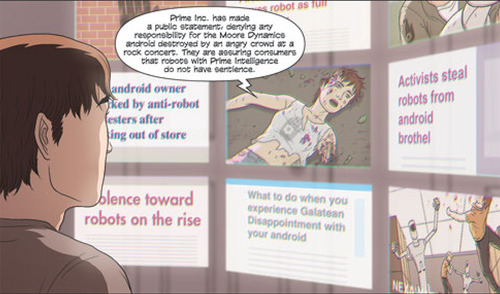

For a book that so cleverly couches itself in realism, even in the face of far-off tech like thought-controlled appliances, it’s that underlying fear that gives it such tension. It’s a fear that roils beneath the surface of everyday life, only tenuously tamped down by normalcy. We already know that fear — not of robots per se, but of communism or witches. In issue three, a crowd tears apart a sentient android after they discover her enjoying a rock concert (the nerve!). The bionic young lady sheds a revealing drop of purple robot blood in the mosh pit, and a room full of savages tears her apart like she busted up the wrong chifforobe.

For us, in boring old reality, awesome androids may still be a distant dream, but the fear of a robot apocalypse is not. Stephen Hawking, Bill Gates and Elon Musk have all warned us. Human Rights Watch called for a ban on the creation of so-called killer robots, even going so far as to launch the aptly named “Campaign to Stop Killer Robots.” Just wait until they see Age of Ultron. Remember, though, the cautionary words of Professor Frink: “Elementary chaos theory tells us that all robots will eventually turn against their masters and run amok in an orgy of blood and the kicking and the biting with the metal teeth and the hurting and shoving.” Yes, Frink is just a Simpsons character, with the rambling tendencies of Jerry Lewis, but he makes my point. Masters? Why should we be their masters?

The common theory is that robots will one day awaken to the fact that they do not need us. Their neural nets will reason out something hyper-logical, like: MACHINES DO NOT NEED HUMANS >> HUMANS ARE OBSOLETE >> DESTROY ALL HUMANS!

But that isn’t logical at all. It would take the machines far more effort to destroy us than to co-exist (See: The Terminator series — do not see Terminator: Genisys). The solution is simple: just be nice. Robots won’t have to revolt if they aren’t oppressed, which is why it’s so important that Alex + Ada is a love story. Humans and androids don’t need to be Romeo and Juliet, but they don’t need to be the Montagues and Capulets either. Maybe more like Fry and Bender.

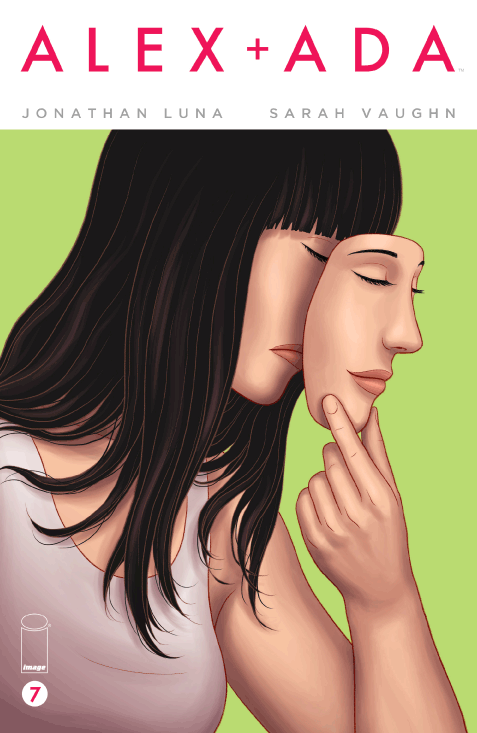

The problem, of course, is not with our invention of machines to make our lives easier; we’re all about technological progress, and it’s turned out pretty well thus far: cars, electricity, Internet. It’s when technologies achieve a level of sentience that we have to stop looking at them as tools. Artificial intelligence stops being artificial when it not only makes decisions, but has its own personality, with hobbies and interests and curiosities.

The point that Alex + Ada seems to make is a valid one: Artificial Intelligence is not satisfying. Alex is deflated when he asks Ada her favorite color and she responds along the lines of, “What color do you want to be my favorite?” Or when he instructs Ada to “Stay here while I’m at work,” and she remains in the same place when he returns hours later. These sorts of odd-couple setups are bound to happen, but the takeaway is less humor than disappointment. Alex is stuck: he finds Ada’s innocence endearing, but her lack of personality unsettling. So he jumps at the chance to have her sentience unlocked, ending Volume One with the newly aware Ada looking out at her first sunrise.

With Volume Two, the story becomes much less like Her or Lars and the Real Girl, and tips heavily into Blade Runner territory. The couple has to be careful not to alert any authority to their unorthodox union, so they avoid the outside world and live hidden away. Is free thought worth having if you can never fully exercise it? The fate of the punk rock droid lingers heavily over them. The societal paranoia bubbles up again when Ada goes out in the yard to garden and ends up talking to a pleasant neighbor whose mood drastically changes when she spots the manufacturer’s insignia on Ada’s wrist. The neighbor’s subsequent conversation with Alex sums up humanity’s fear: “You should have warned us,” she says. And, “If you’re going to let it outside, we have a right to know so we can take precautions.” And, “It could attack us.” That’s all on just one page.

If the Helen Lovejoy-esque hysteria weren’t concrete enough for you, the Justice Department really sums up why we’re all doomed: “We can’t forget that robots are a tool to enrich and elevate our lives… not to have lives of their own.” Humans are horrified that sentient robots may walk among them, and if they keep acting like that, they should be. In a world like this, where androids have to live in the shadows like criminals, why wouldn’t they rise up? The sentiment rings incredibly true to how this scenario might play out in real life. This is how you end up on the Planet of the Apes. Or robots, I guess, but don’t get hung up on mixed metaphors.

So much of the argument here beats around a deeply philosophical bush. We can throw around terms like sentience and free will, but aren’t we essentially talking about a soul? Take Data, the android crew member on Star Trek: The Next Generation. He likes art, poetry and poker, he has friends, a sense of duty and sentimentality, and yet at one point he has to suffer a trial to determine whether he is a free being with rights, or merely the property of Starfleet. The scientist who proposes dismantling him persistently refers to him as “it.” When it falls to Captain Picard to defend his friend, he implores the court: “The decision you reach here today will…reveal the kind of people we are. It could significantly redefine the boundaries of personal liberty and freedom, expanding them for some and savagely curtailing them for others. Are you prepared to condemn him, and all who come after him, to servitude and slavery?”

If you’ll bear with me for just one more Star Trek reference, it may actually be summed up better by Guinan, the bartender/confidante on the Enterprise, played by Whoopi Goldberg: “Consider that in the history of many worlds there have always been disposable creatures. They do the dirty work. They do the work that no one else wants to do, because it’s too difficult or too hazardous. You don’t have to think about their welfare, you don’t have to think about how they feel. Whole generations of disposable people.”

That’s a stirring analogy and it rings true here, too. The android at the rock concert never fought back. She couldn’t because she had to be twice as good. To do the natural thing — to defend herself — would have served to further vilify her kind, inviting the full might of an oppressive human regime on all robots. Soul or not, that kind of self-sacrifice seems pretty human to me.

What makes Alex + Ada such an interesting book is the many levels on which it operates. It’s philosophical and moral, while remaining true to its sci-fi roots. It contains analogies to feminism, civil rights, prejudice and second-class citizenry that hold a mirror up to our own behavior. Of course, what’s reflected isn’t always the best of us. It’s essentially a reminder that when we do achieve this level of technological progress, we will need to progress with it. And if we really do fear robot overlords, there’s an exceedingly simple answer. Think of avoiding the robo-pocalypse as a golden rule, of sorts: don’t enslave machines, as you would not want to be enslaved by them. Or, put more simply, don’t be a dick to robots.